Beyond Plato's Cave

Strategic Resistance to Post-Democratic Governance

We’re all ‘in this together’.

How do we get out?

Recap of ‘Post-Democratic Governance’

In the first part, we traced how ‘expert’ management systematically displaced democracy. Following the Bank of England clearing house model from 1790–1860, this required a broadening of focus to encompass almost everything, an effort that began with NATO’s 1950s ‘security expansion’ trick. Rather than having their mandates widened politically, institutions simply redefined those mandates to include every indirect determinant of their domain, allowing them to intervene anywhere without new treaties or voter approval.

This formula spread globally. The ozone regime in the 1980s proved that expert panels, ‘settled science’, and trade sanctions could bypass politics provided it was ‘sold’ to the masses in the right way. The 1990s then introduced financial enforcement via FATF and WTO standards. And COVID revealed the full system in action — measurement frameworks, algorithmic enforcement, financial coercion, and moral programming all working in synchrony.

The result is a governance architecture where compliance is embedded in infrastructure: health, environment, finance, and identity systems make participation mandatory in practice while presenting it as voluntary. Resistance fails because you cannot vote against algorithms or petition away technical standards.

Part II takes this further: if politics can’t touch the system, what actually can?

Why Democracy Can't Stop It

Traditional democratic resistance fails because it targets symptoms rather than the underlying system. You can't vote your way out of measurement frameworks designed by international expert networks. You can't protest algorithmic resource allocation. You can't petition against scientific consensus produced by computational models.

The system doesn't depend on political control — it operates through technical infrastructure that most people never see. The same rotating networks of experts design the measurements, calibrate the algorithms, interpret the results, and recommend the interventions. They move seamlessly between OECD working groups, UN agency positions, academic institutions, think tank roles, and corporate sustainability departments. They're accountable primarily to each other rather than to democratic publics.

More fundamentally, the system has made itself necessary. Modern life requires participation in measured systems for access to basic resources. Opting out means forfeiting employment, banking, healthcare, education, and social services. The infrastructure of daily existence has been engineered to require compliance.

Even reform attempts get absorbed:

Want more democracy?

Here are stakeholder engagement protocols managed by participation experts.

Want government transparency?

Here are disclosure frameworks that generate mountains of technical documents requiring expert interpretation.

Want reduced bureaucracy?

Here are efficiency metrics that create new assessment requirements.

The enforcement mechanisms make resistance economically suicidal. Challenge ESG requirements and lose access to capital markets. Ignore international health standards and face trade sanctions. Resist digital identity systems and get excluded from financial services.

The ‘voluntary’ nature of compliance becomes meaningless when non-compliance means economic suicide.

And finally, the system enforces itself through morality. To question its assumptions is to appear reckless, selfish, or dangerous. If you resist carbon metrics, you are a ‘climate denier’. If you oppose algorithmic health mandates, you are ‘anti-science’. If you question ESG compliance, you are accused of ‘aiding exploitation’.

The architecture ensures that dissent is not merely penalised but framed as a violation of collective ethics. Resistance is stigmatised as pathology, while obedience is elevated as virtue.

Ergo, refusal is not just punished but moralised — you are debanked for being unethical, as opposed to participating in their inclusive, moral economy.

The Deeper Implications

What we witness isn't just policy change — it's the emergence of a new form of governance where human behaviour becomes programmable at civilisational scale. The system optimises society toward expert-defined targets using measurement, prediction, and resource allocation rather than laws, elections, and political debate.

This represents a fundamental shift in how power operates. Traditional authority was visible — you could see the rulers, understand the rules, and organise to change them. Algorithmic authority is distributed across technical systems that appear neutral while embedding specific values and priorities.

The most sophisticated aspect is how the system programs its own moral legitimacy. The measurements don't just track behaviour — they define virtue. Whatever the algorithms determine will optimise system performance becomes morally necessary. Whatever behaviours the models predict will create problems becomes morally wrong. Ethics becomes a computational output rather than human deliberation.

The ozone regime proved this could work: frame complex issues as scientific problems requiring technical solutions, remove them from democratic debate through expert consensus, then enforce compliance through economic mechanisms.

Every subsequent global governance initiative has followed this template.

What This Means for You

Understanding this system explains why so much of contemporary life feels simultaneously over-managed and out of control. You are experiencing comprehensive optimisation toward goals you did not choose, using methods you cannot see, by authorities you did not elect.

The good news is that recognition breaks the spell. Once you see that ‘following the science’ often means following algorithmic models designed by expert networks, that ‘evidence-based policy’ typically means policy embedded in measurement frameworks, and that ‘global cooperation’ frequently means coordination between unelected technocrats, you can start making conscious choices about participation.

The system depends on voluntary compliance disguised as moral necessity. It maintains power through dependency rather than force. That creates opportunities for building alternatives — parallel institutions, alternative technologies, independent communities — that prioritise human agency over algorithmic optimisation.

But you need to understand the enforcement mechanisms. The system does not work through direct coercion — it works through economic exclusion for refusing to adhere to the moral imperative. Challenge the measurements and you are cast as irresponsible and lose access to markets. Resist the standards and you are branded unethical and face financial isolation. This is the logic of inclusive capitalism: participation is framed as virtue, while dissent is rendered both immoral and economically suicidal. The key is building alternative systems that do not depend on access to the controlled infrastructure.

The choice is becoming stark: accept optimisation by machines designed by experts (who are not accountable to you), or build parallel systems that serve genuine human interest (as opposed to algorithmic efficiency).

The algorithms are watching. But they are not omnipotent — they are tools created by people with specific interests and assumptions. Understanding how they work is the first step towards ensuring they serve human values rather than system optimisation.

The question is not whether you will be measured — that is already happening, by your phone, your car, your doctor’s appointment. The question is whether you will participate in alternative measurements, or whether you will let experts design them for you while economic enforcement mechanisms make resistance increasingly impossible.

Posting angry comments online achieves nothing. If you truly want to escape a future of managed humanity — the Total Human Ecosystem — you must make a conscious effort.

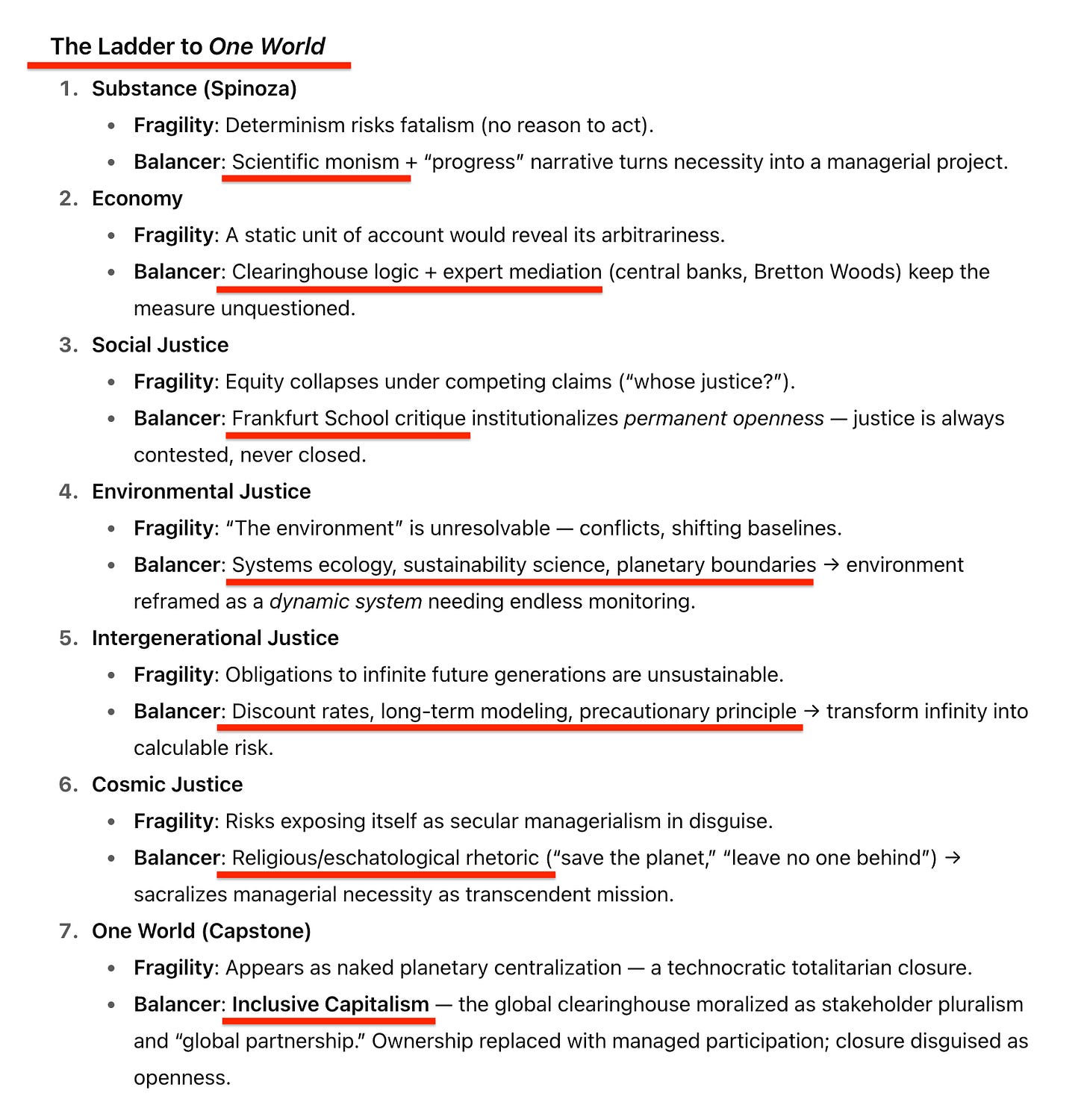

The Operational Logic of ‘One World’

Understanding this architecture reveals that the system's apparent strength is actually its fundamental weakness. A governance model that depends on manufactured consent, fraudulent measurements, and economic coercion rather than genuine legitimacy is inherently brittle. The 70-year construction project created a sophisticated facade over unstable foundations.

The system's vulnerabilities become clear once you understand its operational requirements.

What Alfred Zimmern posited in 1926 was that the Third British Empire would, in effect, dismantle the Second — dominated by geopolitical cunning — and replace it with a commonwealth managed through economics, where the objective was international social justice. To this end, colonialism proved a useful battering ram: it was simply a matter of framing the moral imperative of ending colonialism so that it would logically lead to social justice. And that plan worked.

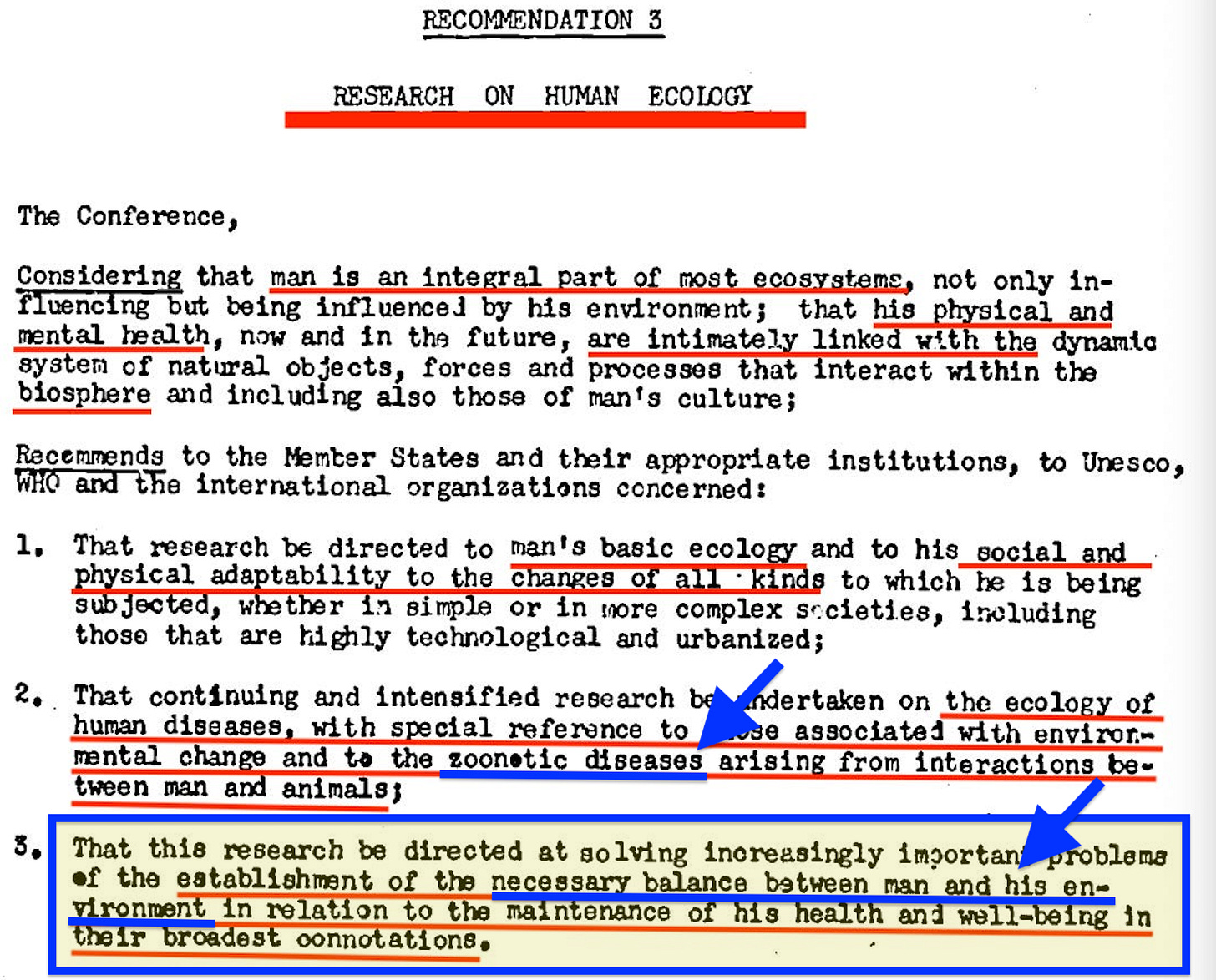

However, by 1968, social justice alone was no longer enough. The UNESCO Biosphere Conference made clear that man was to be balanced not merely with himself, but with the environment as well. This was spelled out in recommendation 3.3, which stated:

… the establishment of the necessary balance between man and his environment…

Thus, Environmental Justice was born.

The next step came in 1987, when Gro Harlem Brundtland, as head of the World Commission on Environment and Development, published Our Common Future. This, in essence, shifted the goalpost to Intergenerational Justice.

A further step came in 1993, when Hans Küng introduced the idea of A Global Ethic through the Parliament of the World’s Religions. Leonard Swidler carried this further into a ‘cosmo-anthropo-centric’ vision: a ‘cosmic ethic’ reaching beyond human materialism to connect the wider universe and spirituality in general.

Building on this trajectory, Stephen Rockefeller and Maurice Strong developed the Earth Charter at the turn of the millennium, presenting it as a Planetary Ethic that combined social, environmental, and intergenerational justice into a single moral framework for global governance.

Really, only a few steps are missing from this trajectory. Following the Earth Charter, the vision developed into the One World model — first articulated by Wendell Willkie and Ralph Barton Perry — and from there into its latest incarnation as a Moral Economy.

The two final steps relate to the model’s most basic assumption. For while Zimmern pushed for international social justice, everything was to be judged through economics — and, more specifically, through its essential unit of account: money. Ergo, the vision ultimately returns to a monetary monism of the very sort established by the Bank of England in 1844.

However, this monetary monism in essence traces back even further — specifically to Spinoza, whose substance monism, expressed through a monetary mode, provides a vision of a world in which everything becomes predictable and subject to management through economics.

Ergo, we now stand amid layers of recursion that run from Spinoza, through Zimmern’s International Social Justice, to the contemporary SDGs — themselves grounded in environmental, economic, and social justice. The intergenerational aspect, meanwhile, is rendered measurable through indicators, which explains the proliferation of SDG metrics. And this, in turn, leads directly to Inclusive Capitalism, with its fixation on measurement and target-based financing — or, in more contemporary jargon, Results-Based Management (RBM) with Key Performance Indicators (KPI) — developed on the back of McNamara’s PPBS revolution, first launched in 1961 under JFK.

That all of this appears oddly aligned with Blavatsky’s Seven Rays and Bailey’s Externalisation of the Hierarchy… well, let’s set that aside for a rainy day. For now, it is enough to see that what is presented as moral progress is, in fact, the operational logic of control in today’s One World.

Fatal Design Flaws

The good news is that this system has fatal design flaws that become obvious once you know where to look. These are not abstract philosophical weaknesses — they are immediate, logical contradictions that anyone can verify. Ask why…

… models of complex systems that cannot predict the weather two weeks out are treated as reliable for century-long climate projections that justify permanent institutional changes.

… ‘ethics’ frameworks always produce identical institutional solutions, no matter what the moral question.

… supposedly ‘decentralised’ systems all clear through the same apex authorities.

And most importantly: ask why unelected central bankers like Mark Carney are integrating social, environmental, and intergenerational concerns into monetary policy when central banks’ official mandate extends only to monetary stability.

So let’s turn to the major flaws that expose the system’s weakness.

Dependence on Your Consent

The entire architecture depends on people choosing to participate in tracked systems. Unlike traditional authoritarianism that controls through direct force, this system requires your active cooperation with measurement frameworks. Every smartphone download, digital payment, social media account, and compliance metric represents a choice to participate in your own monitoring.

This dependency creates immediate tactical opportunities. The system cannot function if significant populations simply opt out of its measurement infrastructure. You don't need to overthrow anything — you need to make it irrelevant by building alternatives that don't require participation in controlled systems.

Fragile Models, Weak Foundations

The computational models underlying the entire system are demonstrably unreliable when subjected to actual scientific scrutiny. Climate models that can't predict regional weather patterns beyond two weeks claim century-long accuracy for global systems. Economic models that failed to predict the 2008 crisis now claim to model ‘systemic risk’ for the entire planet. Health models that miscalculated COVID mortality by orders of magnitude are trusted for ‘pandemic preparedness’.

These models survive because they're rarely challenged on technical grounds. Most opposition revolves around policy preferences, or around debating adjusted epsilons and selective Monte Carlo iterations, instead of addressing the models’ fundamental computational validity. But the models themselves are vulnerable to systematic deconstruction by qualified experts willing to examine their assumptions, data quality, granularity, and historical predictive accuracy.

The reality is that, even under ideal conditions, we can simulate only a tiny fraction of the system — with errors that compound rapidly.

No One is Responsible

The distributed institutional architecture that makes democratic oversight difficult also makes accountability impossible when things go wrong. No one is responsible for the system's failures because responsibility is diffused across international bodies, expert networks, and algorithmic systems.

This creates strategic opportunities. When the models fail, when enforcement mechanisms produce obviously unjust outcomes, when algorithmic bias becomes undeniable, the system has no mechanism for accepting responsibility or implementing corrections. The same institutional distribution that shields it from democratic control makes it incapable of adaptive response to its own failures.

The only ‘solution’ it ever offers is more surveillance data, more computational modelling, and more moral imperatives — all stitched together through Digital Twin predictions.

The Black Box of Power

If a model is too complex for even its architects to explain, then the full implications of any policy derived from it will be too complex for the public to grasp. Complexity becomes a shield, not for accuracy, but for unaccountability.

Yet if entire populations are to live under the dictates of a model, that model cannot remain a black box. Every assumption, data set, and parameter must be exposed to the full beam of sunlight. Anything less means governance by hidden code. At minimum, all models used for binding policy should be fully open-sourced — code, data, configuration, and methods — for public inspection and challenge.

The alternative is to stop calling their outputs ‘algorithmic decisions’ and start naming them for what they are: ‘accountability diffusers’, since their real effect is that no one is left responsible for the outcomes.

These gaps — in transparency, accountability, model fragility, and reliance on voluntary compliance — are the primary vulnerabilities we must exploit, if we choose to operate within the system itself. For while the scent of burning bridges has its appeal, the reality is that counter-strategy has a far greater chance of success when the framework is turned back against itself.

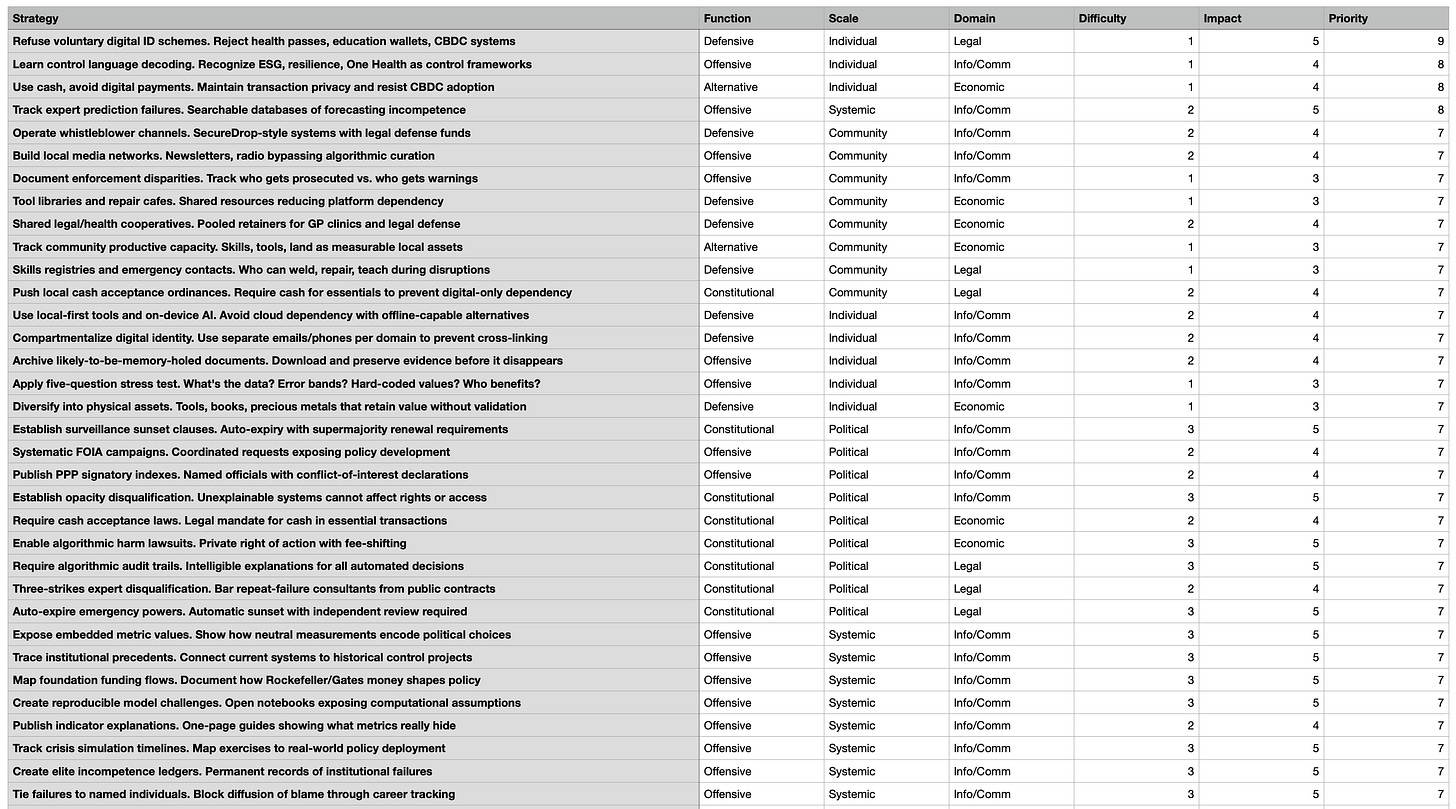

From Theory to Strategy: The Three Bridges

To move from analysis to action, we need a way of mapping strategy onto the system itself. That requires crossing three bridges: function, scale, and domain.

By Strategic Function.

Every intervention has a purpose. Some are defensive — protecting yourself from capture through privacy, hedging, or local systems. Others are offensive — attacking credibility by exposing failures and contradictions. Some are constitutional — pushing to change the rules of the game through transparency laws, audit mandates, or anti-convergence measures. And some are alternative — building parallel institutions, cooperative economies, and independent infrastructure that bypass the system entirely.

By Implementation Scale.

Every intervention has a size. Some can be done at the individual level with no coordination. Others require community scale — groups of 5–150 people who can coordinate directly. Some demand political access — institutional campaigns, legal reforms, legislative pushes. And some require systemic replacement — building infrastructures that can substitute for global systems themselves.

By System Domain.

Every intervention has a field. The system operates across four main domains: information/communication (data, surveillance, media, education); economic (finance, trade, labor, resources); legal (governance, enforcement, rights); and physical (energy, transportation, manufacturing, agriculture). To be effective, strategy has to map onto these same domains.

Crossing these three bridges — function, scale, and domain — gives us a structured field of action. What follows is a matrix of interventions scored by difficulty, impact, and priority. This is not an exhaustive list, but a strategic field guide: a way of seeing where effort can be concentrated to expose the system’s weaknesses and create space for alternatives.

How to Read the Table

The precise scores can be debated, of course. The first task here is to establish the principle itself. Each strategic intervention is scored across three dimensions:

Difficulty (1–5): how hard it is to implement.

1 = trivial (low cost, little expertise)

5 = systemic overhaul (high resistance, high coordination)

Impact (1–5): how much stress it places on the system.

1 = symbolic or marginal

5 = structural challenge to core operations

Priority (1–9): calculated as (Impact − Difficulty) + 5. This measures the trade-off between effort and outcome.

1–2 = catastrophic trade-off (huge effort, trivial return)

3–4 = poor trade-off (effort exceeds benefit, not worth prioritising)

5 = neutral / decent trade-off (roughly balanced — ‘worth doing’, but not strategic)

6–7 = good trade-off (outcome outweighs effort — strategic if sustained)

8–9 = jackpot (outsized impact for minimal effort — highest leverage)
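The scoring rule above can be sketched in a few lines of Python. This is only an illustration of the formula as stated; the function names `priority` and `band` are my own, not part of any existing tool.

```python
def priority(impact: int, difficulty: int) -> int:
    """Priority = (Impact - Difficulty) + 5, with both inputs on the 1-5 scale."""
    for name, value in (("impact", impact), ("difficulty", difficulty)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return (impact - difficulty) + 5


def band(score: int) -> str:
    """Map a 1-9 priority score onto the five bands listed above."""
    if not 1 <= score <= 9:
        raise ValueError(f"score must be between 1 and 9, got {score}")
    if score <= 2:
        return "catastrophic trade-off"
    if score <= 4:
        return "poor trade-off"
    if score == 5:
        return "neutral / decent trade-off"
    if score <= 7:
        return "good trade-off"
    return "jackpot"


# Highest possible leverage: maximum impact for minimum difficulty.
print(priority(impact=5, difficulty=1), band(priority(5, 1)))
```

Note what follows from the arithmetic: a score of 9 is only reachable with Impact 5 and Difficulty 1, while a score of 8 can be reached two ways (Impact 5 with Difficulty 2, or Impact 4 with Difficulty 1).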

The five-question stress test is a simple diagnostic to quickly test whether a policy, model, or framework is legitimate, or coercion dressed up as ‘science’ or ‘consensus’. Here’s a distilled version:

Purpose — what is the claimed purpose, and is it stated clearly?

Metrics — what indicators/measurements are being used, and who chose them?

Authority — who sets the thresholds, and what appeal process exists if they’re wrong?

Alternatives — were different models, assumptions, or options considered? Why not?

Accountability — what happens if the system fails or produces harm? Who is named, and what are the consequences?

It’s deliberately short, universal, and portable — you can apply it to anything from a WHO pandemic treaty to a local ‘voluntary’ digital ID pilot, and it forces hidden values into the open.

If even a single one of those five basic questions yields a ‘no’ or an unclear response, it is almost certainly not in your interest to proceed.
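For those who prefer to keep a written record of each assessment, the decision rule can be sketched as a small checklist. The `Answer` categories and the `stress_test` function are my own hypothetical naming; the only logic taken from the text is that anything short of a satisfactory answer on any of the five questions means you do not proceed.

```python
from enum import Enum


class Answer(Enum):
    SATISFACTORY = "satisfactory"
    NO = "no"
    UNCLEAR = "unclear"


# The five questions, in the order given above.
QUESTIONS = ("purpose", "metrics", "authority", "alternatives", "accountability")


def stress_test(answers: dict) -> tuple:
    """Return (proceed, failed_questions).

    A question with no recorded answer is treated as unclear.
    """
    failed = [
        q for q in QUESTIONS
        if answers.get(q, Answer.UNCLEAR) is not Answer.SATISFACTORY
    ]
    return (not failed, failed)


# Example: four satisfactory answers, but nobody is named as accountable.
assessment = {q: Answer.SATISFACTORY for q in QUESTIONS}
assessment["accountability"] = Answer.UNCLEAR
print(stress_test(assessment))
```

The second element of the result lists which questions failed, which is the useful part: it tells you exactly what to demand an answer to before reconsidering.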

And with that said, here is the table I compiled, together with a screenshot of the highest-return strategies. To repeat: people should feel free to challenge the scoring or add to this spreadsheet. The point is to establish a framework — a mechanism through which a response becomes possible. After all, we have to learn to walk before we can run.

Ergo, consider this a building block: a first attempt at a practical, organised response. The next major building block should logically involve developing strategies and protocols to ease collective action — though these efforts must be well-guarded against the very tactics that have, so far, inserted clearing-house ‘expert’ panels into almost every stratum of resistance.

They can silence individuals; they cannot halt an idea whose time has come.

Top 12 Strategic Recommendations for Resistance

Priority 9: Maximum Leverage Actions

Refuse Voluntary Digital ID Schemes

Function: Defensive | Scale: Individual | Domain: Legal

This represents the single most effective resistance action available to individuals. Digital identity systems create the foundational infrastructure for comprehensive tracking and social credit systems. By refusing health passes, education wallets, vaccine certificates, and central bank digital currency accounts, you prevent integration into the algorithmic governance architecture.

The system depends on voluntary adoption disguised as necessity. Most implementations remain legally optional despite institutional pressure. The key is maintaining access through physical alternatives — carrying cash, paper certificates, printed documents, and utility bills for identity verification. When institutions claim digital-only requirements, demand the legal basis and alternative accommodation procedures.

This action requires minimal effort but delivers maximum systemic disruption by degrading the data quality that algorithmic governance requires. Each refusal forces institutions to maintain parallel systems and reveals the coercive nature of supposedly voluntary programs. The effectiveness scales exponentially as more people refuse — creating infrastructure costs and political visibility that make digital-only mandates unsustainable.

Priority 8: Foundation Actions

Learn Aesopian Control Language Decoding

Function: Offensive | Scale: Individual | Domain: Information/Communication

Understanding how power operates through language manipulation provides immediate intellectual immunity against manipulation while spreading virally through conversation. Terms like ‘stakeholder engagement’, ‘evidence-based policy’, ‘sustainable development’, and ‘public-private partnership’ function as control codes that obscure actual power relationships.

Recognising these patterns reveals how ‘resilience’ means accepting permanent crisis management, how ‘equity’ justifies algorithmic resource allocation, how ‘One Health’ expands medical authority into all domains of life, and how ‘risk-based’ frameworks provide unlimited discretionary power. This knowledge prevents psychological capture and makes propaganda visibly less effective across entire communities.

The impact extends beyond personal protection to systemic delegitimisation. When enough people recognise manipulation techniques, the moral authority that enables technocratic governance begins to collapse. Communities that can decode control language become resistant to manufactured consensus and crisis narratives.

Use Cash and Avoid Digital Payments

Function: Alternative | Scale: Individual | Domain: Economic

Cash transactions remain outside surveillance networks and prevent the transaction tracking that enables comprehensive life-pattern analysis. Digital payments create permanent records that feed into ESG scoring, carbon accounting, and behavioral prediction systems. Using cash preserves transaction privacy while demonstrating that alternatives to digital finance remain viable.

This action becomes more powerful as more people adopt it, creating parallel economic circuits that operate outside algorithmic control. It also prevents dependency on systems designed to enable social credit scoring through spending pattern analysis. The network effects are substantial — businesses that serve cash customers gain competitive advantages while digital-only businesses face reduced market access.

The strategy requires minimal individual effort but delivers significant system stress by reducing the data quality needed for predictive modeling and automated behavioral modification. It preserves the infrastructure needed for local exchange systems while maintaining personal economic sovereignty.

Track Expert Prediction Failures

Function: Offensive | Scale: Systemic | Domain: Information/Communication

Creating systematic records of expert forecasting failures undermines the authority that enables technocratic governance. When epidemiologists, climate modelers, economic advisors, and policy experts make demonstrably false predictions that drive policy, documenting these failures creates permanent accountability records.

Searchable databases showing prediction accuracy versus actual outcomes reveal that expert authority rests on manufactured credibility rather than actual competence. This information becomes politically powerful when politicians cite the same failed experts for new policies, making their reliance on incompetent advice a campaign liability.

The effectiveness compounds over time as the database grows. Failed predictions cannot be memory-holed when systematically documented with timestamps and outcomes. This creates a permanent record that journalists, politicians, and citizens can reference when the same experts promote new interventions based on similar modeling approaches.
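As a rough idea of what such a record might look like, here is a minimal sketch. Everything in it is an assumption of mine: the field names, the 25% tolerance for counting a forecast as a ‘hit’, and the sample entries, which are invented placeholders and not real forecasts.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Prediction:
    source: str                     # who made the forecast
    claim: str                      # what was forecast, ideally quoted verbatim
    made_on: date                   # timestamp of the original statement
    predicted: float                # the forecast figure
    actual: Optional[float] = None  # the observed outcome, once known

    def relative_error(self) -> Optional[float]:
        """Absolute error as a fraction of the outcome; None if unresolved."""
        if self.actual is None or self.actual == 0:
            return None
        return abs(self.predicted - self.actual) / abs(self.actual)


def hit_rate(records, source: str, tolerance: float = 0.25) -> Optional[float]:
    """Share of a source's resolved forecasts that landed within the tolerance."""
    errors = []
    for record in records:
        if record.source != source:
            continue
        error = record.relative_error()
        if error is not None:
            errors.append(error)
    if not errors:
        return None
    return sum(error <= tolerance for error in errors) / len(errors)


# Invented placeholder entries, purely to show the mechanics.
records = [
    Prediction("Forecaster A", "placeholder claim 1", date(2020, 1, 1), 100.0, 90.0),
    Prediction("Forecaster A", "placeholder claim 2", date(2020, 6, 1), 100.0, 10.0),
    Prediction("Forecaster A", "placeholder claim 3", date(2021, 1, 1), 50.0),
]
print(hit_rate(records, "Forecaster A"))
```

Unresolved forecasts are deliberately left out of the hit rate rather than counted as failures, so the record cannot be accused of scoring predictions before the outcome is known.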

Priority 7: Core Strategic Actions

Operate Whistleblower Channels

Function: Defensive | Scale: Community | Domain: Information/Communication

Secure communication systems for exposing institutional failures while providing legal defense resources. Establish SecureDrop-style platforms with encrypted submission processes and dedicated legal defense funds for protecting sources and enabling document verification.

Build Local Media Networks

Function: Offensive | Scale: Community | Domain: Information/Communication

Create information distribution through newsletters, radio programs, and bulletin boards that bypass algorithmic curation and corporate editorial control. Focus on local news, institutional accountability, and community coordination rather than competing with national media.

Document Enforcement Disparities

Function: Offensive | Scale: Community | Domain: Information/Communication

Track selective application of rules to expose system bias and create accountability pressure. Record who gets prosecuted versus who receives warnings for similar violations, revealing preferential treatment and double standards in regulatory enforcement.

Tool Libraries and Repair Cafes

Function: Defensive | Scale: Community | Domain: Economic

Build resource-sharing systems that reduce dependency on platform-mediated commerce. Shared tools, repair workshops, and skill exchanges create community resilience while reducing individual exposure to surveillance-enabled commercial transactions.

Shared Legal/Health Cooperatives

Function: Defensive | Scale: Community | Domain: Economic

Pool resources for retaining general practitioners and legal advocates who prioritise patient/client relationships over institutional compliance. Create direct-pay arrangements that avoid insurance surveillance while maintaining professional healthcare and legal services.

Track Community Productive Capacity

Function: Alternative | Scale: Community | Domain: Economic

Maintain local inventories of skills, tools, land, and infrastructure as measurable community assets. Set annual targets for growing productive capacity in each category, creating independence from external supply chains and institutional dependencies.

Skills Registries and Emergency Contacts

Function: Defensive | Scale: Community | Domain: Legal

Document who can provide essential services during system disruptions: welding, repair, medical aid, communication, food production. Create redundant contact networks that function without digital infrastructure during emergencies or institutional failures.

Push Local Cash Acceptance Ordinances

Function: Constitutional | Scale: Community | Domain: Legal

Require local businesses to accept cash for essential transactions, preventing digital-only dependency that enables social credit systems. Focus on food, fuel, medicine, and transportation to ensure basic needs remain accessible without digital compliance.

These twelve strategies form a comprehensive foundation that individuals and communities can implement immediately while building toward longer-term institutional alternatives. They target the system's core dependencies (voluntary compliance, data quality, expert authority, and infrastructure control) while remaining achievable for ordinary citizens without specialised resources or coordination.

And they’re all perfectly legal.

Withdraw Consent, Build Alternatives

The architecture we’ve mapped only works if we feed it. It depends on moralised consent, opaque models, and behavioural data. That means the leverage is ours: withdraw consent intelligently, force transparency, and build what makes the system irrelevant.

And remember: they already have a catalogue of ‘solutions’ on the shelf, waiting for the next ‘crisis’. White-papered, pilot-tested, vendor-ready — rolled out under the banner of ‘resilience’. The doctrine of the meta-crisis converts episodic emergencies into a permanent state of exception, justifying permanent crisis management: Covid-style lockdown logic forever — or whatever the next pretext allegedly demands.

As a practical close:

Pick three quick wins this week: refuse voluntary digital ID, use cash for daily spend, and learn to decode Aesopian control language.

Do one community move this month: pass a cash-acceptance ordinance, start a tool library/repair café, or launch a local newsletter that bypasses algorithmic curation.

Apply the five-question stress test to any policy that touches you; if a single answer is ‘no’ or unclear, do not proceed.

Back one systemic exposure project: track expert prediction failures or support reproducible challenges to high-stakes models.

Power has migrated from ballots to back-ends; our response must move from slogans to systems. Withdraw your consent, redirect your competence, and build the parallel institutions that put human agency above algorithmic optimisation.

The emperor has no clothes, the models don't work, the ethics are manufactured, and the ‘experts’ exceed their legitimate authority.

Once enough people see these contradictions, the entire edifice of manufactured credibility collapses.

The door isn't locked — and the walls aren't even real.