The Executive Summary

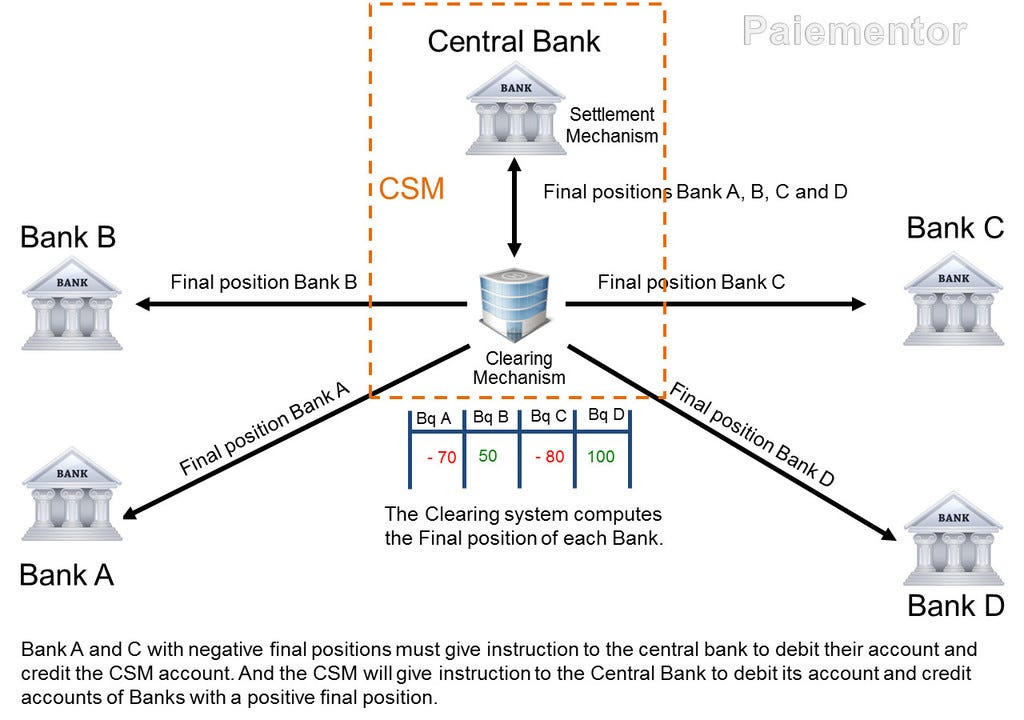

In the 1770s, London’s private banks developed the Bankers’ Clearing House, where they could settle their debts to each other in one place rather than chasing payments across the city. The system worked in three steps. First, a standard was agreed: what counted as a valid claim, and what counted as settled. Second, each transaction was checked against that standard. Third, the result was executed.

Standard. Clearing. Settlement.

Three steps, always in that order, always flowing downward from definition to verdict to execution.
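The three steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Claim` type, the validity rule in `is_valid`, and the bank names are hypothetical and are not drawn from the historical clearing house rules. What it shows is why settling in one place is cheaper: each bank makes or receives a single net payment instead of one payment per claim.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    debtor: str    # bank that owes
    creditor: str  # bank that is owed
    amount: int    # whole pounds, to avoid float rounding


def is_valid(claim: Claim) -> bool:
    """Step 1, the standard: a hypothetical rule for what counts as a valid claim."""
    return claim.amount > 0 and claim.debtor != claim.creditor


def clear(claims: list[Claim]) -> dict[str, int]:
    """Step 2, clearing: check each claim against the standard and net the rest."""
    net: dict[str, int] = defaultdict(int)
    for claim in claims:
        if not is_valid(claim):
            continue  # rejected claims never reach settlement
        net[claim.debtor] -= claim.amount
        net[claim.creditor] += claim.amount
    return dict(net)


def settle(net: dict[str, int]) -> list[str]:
    """Step 3, settlement: one payment per bank instead of one per claim."""
    instructions = []
    for bank, position in sorted(net.items()):
        if position < 0:
            instructions.append(f"{bank} pays {-position} to the clearing house")
        elif position > 0:
            instructions.append(f"{bank} receives {position} from the clearing house")
    return instructions


claims = [
    Claim("Bank A", "Bank B", 100),
    Claim("Bank B", "Bank C", 70),
    Claim("Bank C", "Bank A", 40),
    Claim("Bank A", "Bank A", 5),  # fails the standard: a bank cannot owe itself
]
positions = clear(claims)
for line in settle(positions):
    print(line)
```

In this toy run three valid claims collapse into three net movements that sum to zero, and the invalid claim is dropped at the clearing step, before anything is executed.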

In 1844, the Bank Charter Act placed the Bank of England above this structure by giving it an effective monopoly over new banknote issue in England and Wales. There was now a single institution that decided what counted as money. The clearing houses checked transactions against its standard. Settlement carried out the result. The model was complete: one body to define, one to assess, one to execute.

A century later, when Political and Economic Planning — a body of industrialists, civil servants, and academics known as PEP — published its blueprint for rebuilding British economic life, it examined every major institution in the country and concluded that each one needed radical reform — apart from one. The Bank of England, PEP wrote, already possessed a constitution sufficiently flexible ‘to enable it to adapt itself to filling its place in the new order without requiring any radical changes’.

The Bank of England was already perfect. The task was to copy its architecture into every other domain.

The Fabian Society, founded in 1884, had been working toward exactly this. The Fabians believed society could be reshaped without revolution — gradually, through institutions. Their method was straightforward: get the right people into the right positions, and the policy follows. Get the policy right, and the administration follows.

They built the London School of Economics in 1895 to train the administrators. LSE produced the civil servants, economists, and planners who staffed Whitehall for decades.

For long-range thinking, the Fabians helped establish the Royal Institute of International Affairs in 1920, housed at Chatham House. This became the place where foreign policy was shaped before it reached ministers: a self-selecting group of experts whose analyses defined the options available to elected officials. Governments changed, but Chatham House remained.

By the 1930s, these three bodies — the Fabian Society handling politics, LSE handling economics, and Chatham House handling strategy — were running in parallel. PEP was founded in 1931 to bring them together. It was the clearing house where long-range thinking, economic planning, and political action were combined into a single programme.

The same three-step pattern had now been applied to governance: one body does the thinking, a second translates the thinking into practical frameworks, and a third carries out the result.

In 1918, Richard Haldane’s Machinery of Government report had recommended that science be given its own dedicated place in government — separate from day-to-day politics. The thinking was practical: people running departments do not have time to do serious research. Research should be handled by specialists whose sole job is to study problems and advise those making decisions.

The International Research Council was set up the following year and reorganised in 1931 as the International Council of Scientific Unions (ICSU). Science now had its own international governance — self-selecting, operating above national politics, and carrying the weight of objectivity precisely because it appeared to stand outside the political system.

The 1941 British conference on ‘Science and World Order’ argued that scientific planning should directly inform how society is organised. Once scientific outputs could flow into political and economic planning with the stamp of independence attached, they became very difficult to challenge — because challenging them looked like challenging science itself.

In 1961, Robert McNamara brought systems analysis to the Pentagon through PPBS — the Planning, Programming, and Budgeting System. The idea was simple: set clear objectives, measure whether you are hitting them, and allocate resources based on the measurements. When McNamara moved to the World Bank in 1968, he took the same approach with him. Development lending became conditional: a country receives funds only if it meets targets set by the lender. The lender defines the targets, measures progress, and releases or withholds money accordingly.
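The define, measure, release loop described above can be written down as a minimal sketch. The indicator names, thresholds, and loan amount below are invented for illustration, and the rule that a missing measurement counts as a missed target is an assumption of this sketch, not a documented World Bank procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    indicator: str    # name of the measured quantity (hypothetical)
    threshold: float  # minimum value the lender requires


def assess(targets: list[Target], measurements: dict[str, float]) -> list[str]:
    """Return the indicators that fall short; an unmeasured indicator counts as missed."""
    return [
        t.indicator
        for t in targets
        if measurements.get(t.indicator, float("-inf")) < t.threshold
    ]


def release_tranche(
    amount: int, targets: list[Target], measurements: dict[str, float]
) -> tuple[int, list[str]]:
    """Release the full tranche only if every target is met; otherwise release nothing."""
    missed = assess(targets, measurements)
    return (amount if not missed else 0, missed)


# The lender defines the targets...
targets = [
    Target("school_enrolment_pct", 90.0),
    Target("road_km_built", 400.0),
]
# ...measures progress...
measurements = {"school_enrolment_pct": 92.5, "road_km_built": 310.0}
# ...and releases or withholds the money accordingly.
released, missed = release_tranche(50_000_000, targets, measurements)
print(released, missed)
```

The borrower appears in the sketch only as a row of measurements. The targets, the thresholds, and the release rule all sit on the lender's side.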

The environmental route developed through its own set of institutions. The International Union for Conservation of Nature (IUCN), established in 1948, built the first international framework for classifying and managing natural resources. Its work fed into the United Nations Environment Programme (1972), the Brundtland Commission’s definition of sustainable development (1987), the Rio Earth Summit (1992), and eventually the Network for Greening the Financial System and the International Sustainability Standards Board — the bodies whose definitions now determine what counts as environmental compliance in global finance.

The Club of Rome (1968) and the International Institute for Applied Systems Analysis (1972) added computer modelling. ‘The Limits to Growth’, published in 1972, was among the first simulations to model the planet as a system of measurable flows: resources consumed, pollution generated, population growing. The model did not just describe the world. It set the terms in which the world would be discussed: carrying capacity, overshoot, sustainability. Those terms became the language of policy.

The Intergovernmental Panel on Climate Change (IPCC), established in 1988, made climate modelling the basis of official scientific consensus. The IPCC does not conduct its own research. It reviews and assesses the existing research, and its conclusions are treated by governments and financial regulators as settled. The NGFS replicates this structure one layer closer to the money through its own Scientific Advisory Committee (SAC), a self-selecting body that assesses climate-financial risk research and produces outputs that the Bank for International Settlements (BIS) and its member central banks adopt directly into supervisory frameworks. The IPCC clears the science. The NGFS SAC clears the financial application of the science.

The ethical route ran alongside all of this. In 1893, Paul Carus organised the World Parliament of Religions and published ‘The Religion of Science’. His argument was that science and morality are two sides of the same coin — science tells us what is true, and what is true carries moral weight. If the science says something is harmful, then doing it is not merely foolish but wrong. This gave scientific institutions moral authority.

A hundred years later, Hans Küng drafted the ‘Declaration Toward a Global Ethic’ for the 1993 Parliament of the World’s Religions. Küng turned Carus’s argument into a universal moral framework of four principles addressed to every person on earth: non-violence, solidarity, tolerance, and equal rights. The ethic no longer belonged to any one religion. It belonged to all of them, and to science as well.

The Earth Charter (2000), led by Mikhail Gorbachev and Maurice Strong, attached this moral framework directly to the environmental models. The planetary data produced by the Club of Rome, IIASA, and the IPCC now carried moral authority on top of scientific authority. Questioning the models was no longer just a technical disagreement — it was a moral failing.

The surveillance and measurement machinery completed the picture. UNEP’s Global Environment Monitoring System (GEMS) built the data network — continuous observation of the planet, feeding numbers into the models. Without this data layer, the models have nothing to work with. With it, the natural world becomes something that can be continuously tracked, classified, and processed.

In 2015, the United Nations adopted the seventeen Sustainable Development Goals — the SDGs. These turned the ethic into a set of defined targets: what the world should look like, expressed as goals. The 231 SDG indicators turned those goals into numbers — measurable, comparable, and ready to be attached to lending conditions and investment criteria. The ethic had become a metric.

Results-Based Management and Key Performance Indicators provided the enforcement mechanism. This is McNamara’s PPBS applied to development: set a target using SDG indicators, release funds on condition that the target is met, and measure performance with KPIs. ‘Impact’ investing and blended finance turned this into a business model — public money mixed with private, with returns extracted through compliance with SDG indicators. The taxpayer funds the programme, the private investor takes the return. The indicators decide whether the conditions have been met.

The Global Environment Facility’s ‘landscape approach’ and proposed Natural Asset Companies took this further. Forests, wetlands, and watersheds are reclassified as financial assets — valued according to the ecosystem services they provide, placed on a ledger, and governed by the same compliance rules as any other financial instrument. The physical world is pulled into the financial system.

The stranded assets framework reached the same result from the other direction. Fossil fuel reserves and carbon-intensive infrastructure are reclassified as liabilities, not by legislation but by the standards bodies (ISSB, NGFS) redefining what counts as a sound asset. Once the definition changes, capital moves. The reclassification does not need a vote. It needs only a new standard issued by a committee most voters have never heard of.

The finance route went international in stages. After the Franco-Prussian War of 1870–71, five billion francs in reparations had to be processed between France and Germany. Gerson von Bleichröder handled the German side. Alphonse de Rothschild led the French syndicate that raised the bonds. Private banks were already performing the clearing function between sovereign nations.

In 1886, Alfred de Rothschild proposed a permanent international clearing mechanism at the Brussels monetary conference. In 1892, the economist Julius Wolf published a detailed plan for an international clearing house to settle trade balances between countries. The London model — perfected over a century — was being prepared for global deployment decades before the institution that would house it existed.

That institution arrived in 1930. The Bank for International Settlements was established to process German war reparations under the Young Plan — an international clearing house in a neutral country, settling between sovereigns.

The Bretton Woods Conference of 1944 extended the architecture further. The World Bank and the International Monetary Fund created the framework for conditional lending to entire nations, the same standard-clearing-settlement structure scaled to the sovereign level. The Basel accords, administered by the BIS, extended it to commercial banking, with capital requirements enforcing the definitions set at the top.

SWIFT and the ISO 20022 messaging standard gave the system a universal language. When every cross-border payment carries a standardised, machine-readable description of who is paying, why, and to whom, any classification or surveillance layer can be placed on top. Climate was the first application. It will not be the last.

The BIS Innovation Hub, established in 2019, is now assembling all of these threads into a single infrastructure. Its projects — spread across seven centres worldwide — each build a different part of the architecture. Gaia uses artificial intelligence to extract and standardise climate data. Aurora uses network analytics to detect financial crime patterns. Agorá tests tokenised settlement on a programmable platform. Rosalind builds the interface connecting central bank systems to commercial applications. Pine tests how central banks could run monetary policy through smart contracts.

Individually, each project solves a specific technical problem. Together, they form the unified ledger described in the BIS’s 2023 blueprint — a single programmable platform combining digital central bank money, tokenised commercial deposits, and tokenised assets, with compliance conditions built into the settlement layer.

Conditional central bank digital currencies are the final step. When the conditions are embedded in the money itself — when every unit of currency carries the rules of its own use, checked at every transaction, enforced at the moment of payment — the architecture is complete.
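What ‘conditions embedded in the money itself’ could mean in practice is easiest to see in a toy model. The sketch below is purely hypothetical: the `ConditionalBalance` class, the rule signature, and the spending categories are invented for illustration and do not describe any published CBDC design or BIS project. It shows only the general idea of a balance that checks its own rules before each payment.

```python
from dataclasses import dataclass, field
from typing import Callable

# A rule receives (spending category, amount) and returns whether the payment is allowed.
Rule = Callable[[str, int], bool]


@dataclass
class ConditionalBalance:
    amount: int
    rules: list[Rule] = field(default_factory=list)

    def pay(self, category: str, amount: int) -> bool:
        """Attempt a payment; every rule is checked before any money moves."""
        if amount <= 0 or amount > self.amount:
            return False
        if not all(rule(category, amount) for rule in self.rules):
            return False  # refused at the moment of payment
        self.amount -= amount
        return True


# Hypothetical conditions attached by the issuer.
allowed_categories = {"groceries", "transport"}
wallet = ConditionalBalance(
    amount=100,
    rules=[
        lambda category, amount: category in allowed_categories,
        lambda category, amount: amount <= 50,  # per-payment cap
    ],
)

print(wallet.pay("groceries", 30))  # within the rules
print(wallet.pay("fuel", 10))       # category not on the allowlist
print(wallet.pay("transport", 60))  # exceeds the per-payment cap
print(wallet.amount)
```

In this sketch a refused payment never executes, so clearing and settlement collapse into a single step. There is no separate point at which the refusal could be reviewed.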

The ethic became a standard. The standard became a measurement. The measurement became a condition. The condition became a line of code. And the code runs without asking anyone’s permission.

The thinkers defined the standard. The assessors built the measurements. The administrators enforced the result. And CBDCs will soon carry the entire chain — from the London clearing house to programmable settlement — in every transaction on earth.
